Alibaba Unveils Qwen3.5: 397B MoE Model Sparks The Agentic AI Race

Alibaba Unveils Qwen3.5 397B MoE Model Sparks The Agentic AI Race | Image With Reuters

Alibaba has officially unveiled Qwen3.5, and bhai this is not just another chatbot update. The February 16, 2026 launch, right before Lunar New Year, clearly signals China’s aggressive push into the agentic AI era. Unlike traditional large language models that mostly respond to prompts, Qwen3.5 is built to take action. It can execute multi step tasks, interact visually with mobile and desktop apps, and handle massive context windows.

With a 397 billion parameter architecture using Mixture of Experts and hybrid linear attention, Alibaba claims the model delivers frontier level reasoning at up to 60 percent lower cost. Naturally, comparisons with GPT-5.2, Claude Opus 4.5, and Gemini 3 Pro have started. But the bigger story is not just benchmarks. It is efficiency, openness, and real world automation.

Key Takeaways

- Qwen3.5 launched on February 16, 2026 ahead of Lunar New Year

- Flagship open weight model: Qwen3.5-397B-A17B with 397B total parameters

- Only 17B parameters activated per forward pass using sparse MoE

- Up to 8.6x to 19x higher decoding throughput than Qwen3-Max

- Around 60 percent lower usage cost

- Supports up to 1M token context in hosted Qwen3.5-Plus

- Processes videos up to 2 hours

- Covers 201 languages and dialects

- Released under Apache 2.0 license

- Strong positive developer response on X

The Shift From Chatbots To Agentic AI

For the past two years, the AI race was mostly about who has the smartest chatbot. Now the game has changed. The focus is on agentic AI. That means systems that do tasks instead of just replying with text.

Qwen3.5 introduces visual agentic capabilities. It can interact with graphical user interfaces on phones and desktops. This means it can open apps, navigate menus, fill forms, and automate workflows with minimal supervision. This shift puts Alibaba directly in competition with companies building AI agents for productivity, SaaS automation, and enterprise tools.

Many Western companies are also moving in this direction. But Alibaba is positioning Qwen3.5 as cheaper and more efficient. That combination is powerful.

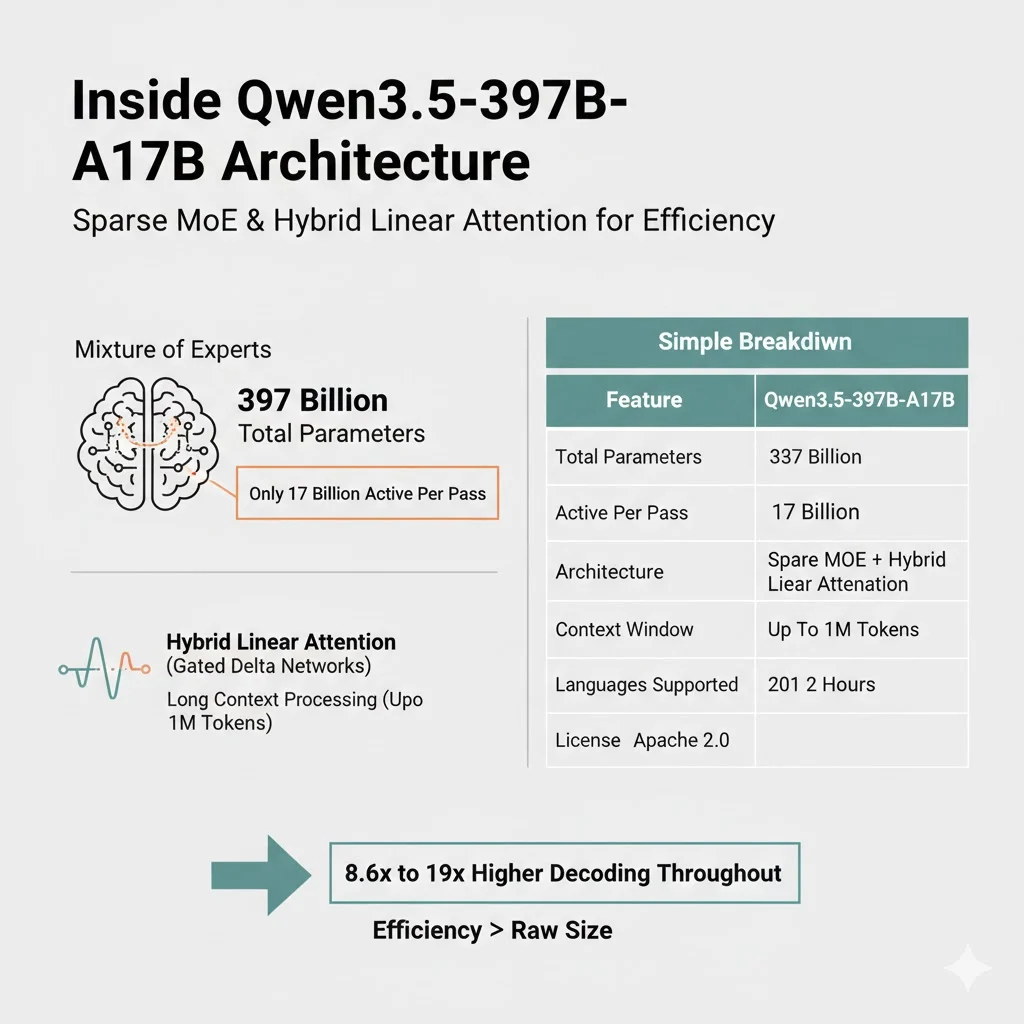

Inside Qwen3.5-397B-A17B Architecture

The flagship open weight model is called Qwen3.5-397B-A17B. The numbers look massive. 397 billion total parameters. But here is the twist.

It uses a sparse Mixture of Experts architecture. Only 17 billion parameters are activated per forward pass. That means you get the capability of a very large model without paying the full compute cost every time.

On top of that, Alibaba integrates hybrid linear attention using Gated Delta Networks. This improves efficiency for long context processing.

Here is a simple breakdown:

| Feature | Qwen3.5-397B-A17B |

|---|---|

| Total Parameters | 397 Billion |

| Active Per Pass | 17 Billion |

| Architecture | Sparse MoE + Hybrid Linear Attention |

| Context Window | Up to 1M Tokens |

| Video Processing | Up to 2 Hours |

| Languages Supported | 201 |

| License | Apache 2.0 |

This architecture explains why Alibaba claims 8.6x to 19x higher decoding throughput compared to Qwen3-Max. Efficiency is becoming more important than just raw size.

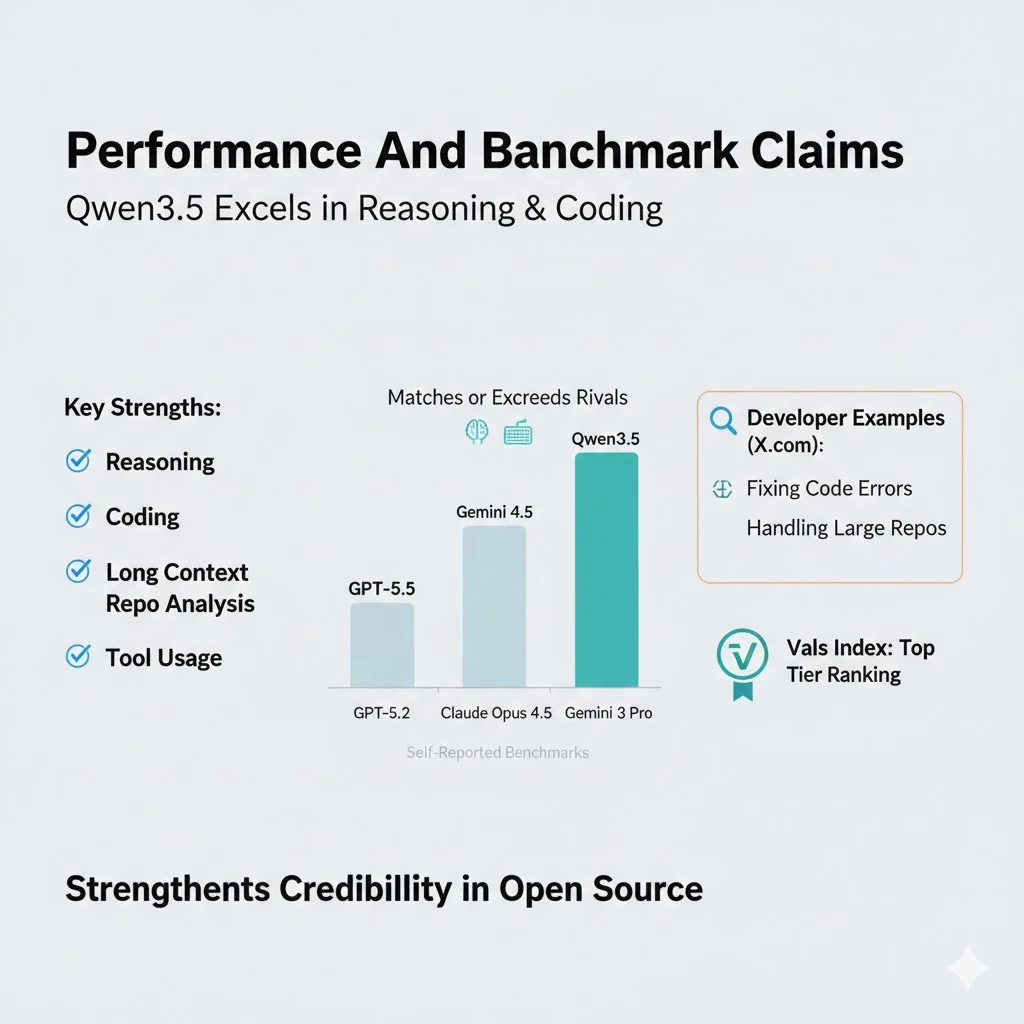

Performance And Benchmark Claims

Alibaba states that Qwen3.5 matches or exceeds models like GPT-5.2, Claude Opus 4.5, and Gemini 3 Pro in various reasoning and coding benchmarks. These are self reported benchmarks, so independent verification will matter.

However, what stands out is not just reasoning. It is coding, long context repo analysis, and tool usage. Developers on X have shared examples of fixing code errors and handling large repositories smoothly.

On open weight leaderboards like Vals Index, Qwen3.5 reportedly ranks in the top tier. That strengthens its credibility in the open source ecosystem.

Qwen3.5-Plus And Hosted API Strategy

Alibaba also released Qwen3.5-Plus through Alibaba Cloud Model Studio. This hosted version includes built in tools and adaptive tool calling. It is designed for production ready deployment.

The hosted version supports up to 1 million tokens context window. That is huge for enterprise use cases like legal document review, long financial filings, or large codebases.

The dual strategy is smart:

- Open weight version for developers who want full control

- Hosted API version for enterprises that want managed infrastructure

This reduces dependency on Western cloud providers and strengthens Alibaba Cloud’s ecosystem.

Public Opinion On X

Now this is where things get interesting. Reactions on X have been largely positive.

The official announcement from @Alibaba_Qwen received thousands of likes and reposts. Developers are excited about the multimodal capabilities and agentic features.

One developer mentioned running a 4 bit quantized version on a Mac Studio M3 Ultra at around 35 tokens per second. He described the output as very usable and stable during long tasks. That kind of real world feedback matters more than marketing slides.

Analysts are also discussing economic advantages. Some posts describe Qwen3.5 as an asymmetric opportunity. High performance at 60 percent lower cost can make it attractive in emerging markets and non Western regions.

Multilingual support has also received praise. Users highlighted strong Russian language handling and better Cyrillic rendering in image generation. Supporting 201 languages and dialects is a big jump from earlier versions.

Community integrations started almost immediately. Developers added support to tools like LM Studio and MLX. Quick ecosystem adoption shows strong developer trust.

China’s Intensifying AI Competition

Qwen3.5 does not exist in isolation. China’s AI space is moving very fast.

Companies like ByteDance with Doubao 2.0, Zhipu with GLM-5, MiniMax with M2.5, and DeepSeek are pushing rapid iterations. Launching before key holidays is also strategic. It drives adoption and media attention.

The trend is clear:

- From chatbot to agent

- From pure text to multimodal

- From massive size to efficient MoE

- From closed APIs to open weight releases

Open weight models democratize access. Developers can fine tune and deploy locally without heavy cloud dependency. That builds global developer goodwill.

Why Efficiency Matters More Than Ever

Compute is expensive. GPU supply is tight. Energy costs are rising. So capability per unit of inference cost is becoming the real metric.

Qwen3.5 focuses exactly on that. Sparse activation, hybrid attention, long context optimization. These are engineering choices aimed at real world scalability.

If a model delivers near frontier reasoning at significantly lower cost, enterprises will notice. Startups will notice. Governments will notice.

This efficiency narrative is shaping the next phase of the AI race.

What This Means For Developers And Enterprises

For developers, Qwen3.5 offers:

- Open weight access under Apache 2.0

- Strong coding and repo analysis

- Local deployment possibilities

- Multilingual support

For enterprises, it offers:

- Hosted API with adaptive tool calling

- Massive context window

- Automation through agentic workflows

- Lower operational cost

The real test will be large scale deployments. Benchmarks are one thing. Production reliability is another. But early signals from the developer community look promising.

Is China Closing The AI Gap

Many analysts previously said Chinese AI models were months behind Western rivals. With releases like Qwen3.5, that gap appears to be narrowing.

The focus on practical breakthroughs instead of just headline parameter counts is smart. Cheaper, faster, agentic, openly accessible. These are the features that matter in real world adoption.

Excitement is high around productivity automation and global developer ecosystems. Qwen3.5 has clearly entered trending AI discussions worldwide.

Whether it truly surpasses Western models will depend on independent testing and long term reliability. But one thing is clear. The AI race is no longer one sided.

Conclusion

Alibaba unveiling Qwen3.5 is more than a product update. It represents a shift toward agentic, multimodal, and efficiency driven AI systems. With a 397B parameter MoE architecture, 1M token context window, 201 language support, and strong developer enthusiasm, Qwen3.5 positions itself as a serious global contender.

The next few months will reveal how enterprises and developers adopt it. But right now, in the AI world, Qwen3.5 has definitely made some serious noise.

Share This Post